This article explores the testing tools and techniques you can use at each stage of your software development when working with microservices.

Before jumping into the specific tools, we advise reading the previous post in this series, Testing Microservices, for a broader introduction to the topic and a detailed overview of the types of tests that may be required.

These articles summarize a webinar on the topic, hosted by Piotr Litwiński, Principal Engineer at Zartis. You can watch the full webinar here.

Why do we test microservices?

We would say, first of all, for peace of mind, but in practice, testing microservices can help us eliminate many problems by avoiding a domino effect.

Microservices are usually developed with business-oriented APIs to encapsulate a core business capability. The principle of loose coupling helps eliminate or minimize dependencies between services and their consumers. However, the biggest issue in a distributed environment is that you have a lot of moving parts within the systems and subsystems. Everything is constantly changing, and a lot of services are interacting with each other simultaneously.

Imagine that you have 10 teams, constantly working on various aspects of your systems and subsystems, deploying multiple times a day. Without proper testing, you might experience some side effects because you weren’t aware of the changes made by other team members. This gets very complex and in case of a mistake, the rollbacks are usually quite substantial. Due to the dependency tree, taking out one of those microservices from the system might be difficult, as that usually implies you need to also revert other deployments which are dependent on this microservice.

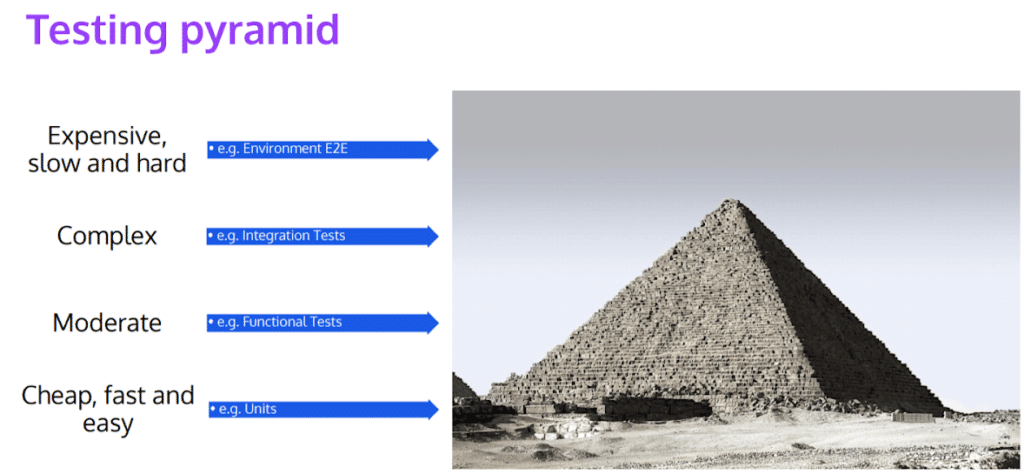

The Testing Pyramid and Test Types

To keep on top of all changes, there are different tests you can run at different stages of the software development life cycle (SDLC). These can be grouped into Build tests, Environmental tests, and System tests.

Build tests usually include Unit tests, Contract tests, and Functional or Integration tests. The goal here is to eliminate issues in the build environment before they are pushed out anywhere.

To run environmental tests you don’t need a full environment, but you need more than one service or system component to test. To that end, you can use simulators to test services running at the same time. These tests usually include Deployed tests and End-to-end (E2E) testing.

For system tests, there are two test groups to consider: Performance tests and Resilience/Availability tests. Using these tests, you can test your whole system or part of a subsystem, depending on how you want to slice your tests.

Tools For Testing Microservices

Just as there are different tests for different phases of the SDLC, there are also different tools you can use to test your software at these phases. Here, we will cover Developer Tools and DevOps Tools, which refer to the phases before and after deploying a microservice.

Developer Tools for Microservices Testing

As the name suggests, the tools and techniques below will show you the options software developers have to verify the correctness of their code before sending it out to the DevOps team to publish it.

In-process test tools:

- Stubs & mocks: These are well-established patterns. You either implement a replacement yourself or use one of the existing libraries to replace something that you would usually inject into your service or class.

- Coarse-grained unit tests: Here, instead of testing just one class or one unit of code, you make the unit under test much bigger. For instance, you can use the TestServer NuGet package to spin up almost the whole service in memory.

- Well defined contracts: Contracts will allow you to have more modularity and test things in isolation because each contract will be well defined. It also helps develop software in a better way because it pushes you to think about a lot of things even before starting the foundation, and you can catch any potential issues in the design much earlier.

- In-memory testing frameworks: These tests are more extensive than coarse-grained unit tests but, again, they let you test a lot of things within the same process, so you can still debug them from, for instance, Visual Studio. Additionally, some ORM frameworks (e.g. Entity Framework) allow you to swap in an in-memory implementation, using tools like the Entity Framework In-Memory NuGet package or SQLite set to work in in-memory mode. IdentityServer can also run in in-memory mode.
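To make the in-process options above more concrete, here is a minimal sketch in Python (the article's own examples come from the .NET world, so treat this purely as an illustration). `OrderService`, its `orders` table, and the payment gateway are hypothetical names invented for the example. The gateway is replaced with a mock from the standard library, and the database is SQLite running in in-memory mode.

```python
import sqlite3
from unittest.mock import Mock


class OrderService:
    """Hypothetical service; its collaborators are injected so tests can replace them."""

    def __init__(self, db, payment_gateway):
        self.db = db
        self.payment_gateway = payment_gateway

    def place_order(self, customer, amount):
        # Charge first; only persist the order if the payment succeeded.
        if not self.payment_gateway.charge(customer, amount):
            return None
        cursor = self.db.execute(
            "INSERT INTO orders (customer, amount) VALUES (?, ?)",
            (customer, amount),
        )
        self.db.commit()
        return cursor.lastrowid


def make_in_memory_db():
    # ":memory:" keeps the whole database inside the test process.
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    return db


def test_order_is_stored_when_payment_succeeds():
    db = make_in_memory_db()
    gateway = Mock()  # stands in for the real payment gateway
    gateway.charge.return_value = True

    order_id = OrderService(db, gateway).place_order("alice", 25.0)

    gateway.charge.assert_called_once_with("alice", 25.0)
    row = db.execute(
        "SELECT customer, amount FROM orders WHERE id = ?", (order_id,)
    ).fetchone()
    assert row == ("alice", 25.0)


def test_nothing_is_stored_when_payment_fails():
    db = make_in_memory_db()
    gateway = Mock()
    gateway.charge.return_value = False

    assert OrderService(db, gateway).place_order("bob", 10.0) is None
    assert db.execute("SELECT COUNT(*) FROM orders").fetchone()[0] == 0
```

Because both collaborators are injected, the same service code can run against the real gateway and database in production, while the tests stay fast and debuggable inside a single process.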

Machine level test tools:

These tests still run on your machine and you can still debug them, but they usually require something running in the background, such as a mock server, for your code to talk to.

- Contract verification: The idea is that you record calls by redirecting the traffic through a test setup while running a set of tests. Pact is a really nice framework that lets you verify that the contracts are still being met.

- WebDriver & (headless) browsers: If your contracts are verified, you can use WebDriver and a headless browser to spin up the whole server and mock the APIs that you usually talk to. You can write tests that click on real elements to see how they behave. To make it faster, you can run these tests in headless mode.

- Local counterparts: Using a local SQL database, a Cosmos DB emulator, or a JSON or mock server, you can run local stand-ins for the dependencies your service talks to.

- Easily distributable counterparts: You can run a MySQL database in Docker, or use tools like AWS Fargate or Azure Container Instances (ACI) to spin up a Docker container in the cloud.
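As a rough illustration of the machine-level setup described above, the following Python sketch starts a stub HTTP server on a local port, records every call it receives, and lets client code talk to it as if it were the real dependency. It uses only the standard library and is not Pact; the `/users/{id}` endpoint, the response payload, and the `fetch_user` helper are assumptions made up for the example.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

RECORDED_CALLS = []  # every request the stub receives, kept for later inspection


class StubUserApi(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a user service that this code depends on."""

    def do_GET(self):
        RECORDED_CALLS.append(("GET", self.path))
        body = json.dumps({"id": 42, "name": "Ada"}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def start_stub_server():
    # Port 0 asks the operating system for any free port.
    server = HTTPServer(("127.0.0.1", 0), StubUserApi)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_user(host, port, user_id):
    """Client code under test: calls the (stubbed) user service."""
    connection = http.client.HTTPConnection(host, port, timeout=5)
    try:
        connection.request("GET", f"/users/{user_id}")
        response = connection.getresponse()
        return response.status, json.loads(response.read())
    finally:
        connection.close()


def run_example():
    server = start_stub_server()
    try:
        return fetch_user("127.0.0.1", server.server_port, 42)
    finally:
        server.shutdown()
        server.server_close()
```

After `run_example()` returns, `RECORDED_CALLS` holds the requests the client actually made, which a test can compare against the calls the provider has agreed to support. A dedicated contract-testing framework automates that comparison on both the consumer and the provider side.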

DevOps Tools for Microservices Testing

DevOps teams are usually not involved in testing directly, but there are a lot of things they can do to actually make sure that services are working as expected. These are tools or techniques that can be used once the services are deployed to a given environment.

Running E2E/multi-service integration tests on a given environment is one of the most common techniques. Once you deploy to that testing environment, you can set up automated tests using SpecFlow, or something similar, that check the basic flows.

Running app diagnostics at start-up is another very useful technique. This is a piece of code that checks APIs and databases for problems such as missing schemas or incorrect versions. It tells you whether anything goes wrong at application start-up or whether everything is still working. This way, you can see issues in the pipeline before you deploy a feature, or just as you are deploying it.
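A start-up diagnostic can be as small as a list of check functions that each return a problem description or nothing. The Python sketch below assumes a hypothetical `schema_info` table holding a version number and two required tables, `orders` and `customers`; none of these names come from the webinar, and SQLite is used only so the example is self-contained.

```python
import sqlite3

EXPECTED_SCHEMA_VERSION = 3  # hypothetical version this build was written against


def check_schema_version(db):
    # Assumes a one-row table "schema_info(version)" maintained by migrations.
    row = db.execute("SELECT version FROM schema_info").fetchone()
    if row is None:
        return "schema_info table is empty"
    if row[0] != EXPECTED_SCHEMA_VERSION:
        return f"schema version {row[0]}, expected {EXPECTED_SCHEMA_VERSION}"
    return None


def check_required_tables(db, required=("orders", "customers")):
    rows = db.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table'"
    ).fetchall()
    existing = {name for (name,) in rows}
    missing = sorted(set(required) - existing)
    return f"missing tables: {', '.join(missing)}" if missing else None


def run_startup_diagnostics(db):
    """Run every check and return a list of human-readable problems."""
    problems = []
    for check in (check_schema_version, check_required_tables):
        try:
            problem = check(db)
        except sqlite3.Error as error:
            # A failing query is itself a finding, not a reason to crash.
            problem = f"{check.__name__} failed: {error}"
        if problem:
            problems.append(problem)
    return problems
```

An application could call `run_startup_diagnostics` once while booting and refuse to start, or report itself as unhealthy, when the returned list is not empty.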

Metrics and dashboards are a very useful tool if set up right. Whenever you deploy something, you should look at the logs and certain dashboards to see, for instance, whether your server throughput is still as high as before or whether it went down after the deployment.

Setting up the right alert system is the key to using dashboards efficiently. Instead of looking at 20 dashboards, which does not happen in reality anyway, you can have one or two overarching ones and get notified of any anomalies. You can send notifications by email, Slack, or any other chat tool to report problems when a new service is deployed.
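The throughput example can be turned into a simple alert rule. The sketch below is only an illustration in Python: the 20% threshold, the sample values, and the `notify` callback (which in practice might post to email or a chat tool) are all assumptions, and real monitoring systems usually provide their own rule language for this.

```python
from statistics import mean


def throughput_drop(before, after):
    """Return the relative drop in mean throughput (0.25 means 25% lower)."""
    baseline = mean(before)
    if baseline == 0:
        return 0.0
    return (baseline - mean(after)) / baseline


def check_deployment(before, after, notify, max_drop=0.2):
    """Call notify(message) when throughput fell by more than max_drop."""
    drop = throughput_drop(before, after)
    if drop > max_drop:
        notify(f"Throughput dropped {drop:.0%} after deployment")
        return True
    return False
```

For example, passing `print` as `notify` with requests-per-second samples taken before and after a release gives a quick anomaly check without anyone having to watch a dashboard.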

It’s important that you don’t have metrics and logs only on your production environments, but on all environments, so you can catch issues as fast as possible, as early as possible.

Final Thoughts

You may need to spend some time and resources on getting your team on board and aligning your development and testing approach. However, in the long run, you will have a team that is fully aligned in vision and development approach.

Testing at lower levels has many other advantages. You can have huge savings in time and regression, and you can catch bugs earlier but, most importantly, you will gain the confidence to refactor or expand a system without losing any sleep over potential issues that may arise. Resolving issues before they reach the customer will hugely increase your customers' and your team's confidence in the product, and set your business up for success.